
Fundamentals

1 - Intro to DL

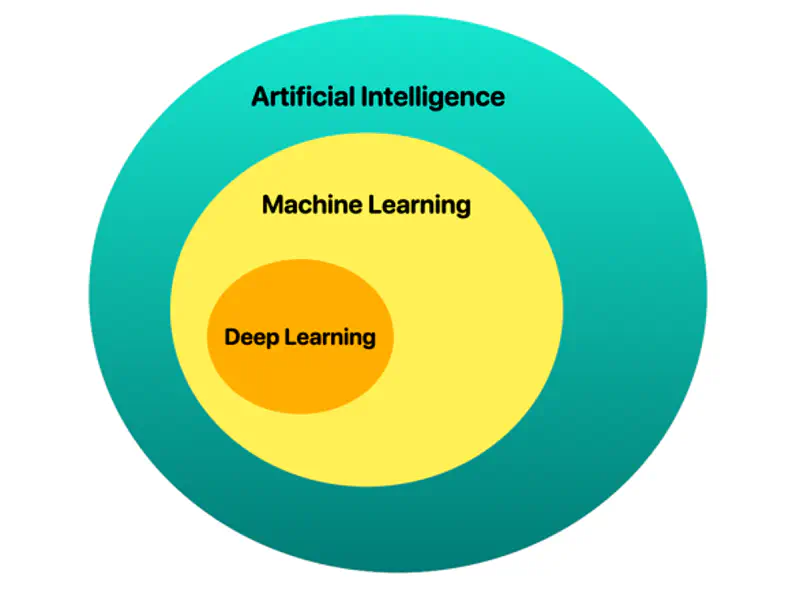

📘 Deep learning is a subset of AI and machine learning that uses multi-layered artificial neural networks to simulate human-like learning, analyzing vast data to identify complex patterns, such as recognizing objects in photos, detecting medical anomalies, or processing natural language, like LLMs.

💡 The ‘deep’ in ‘deep learning’ stands for the idea of successive layers of representations.

🐋 It is “deep” because it uses many layers (often hundreds) to automatically extract, transform, and map data features into predictions, surpassing traditional machine learning in handling unstructured data.

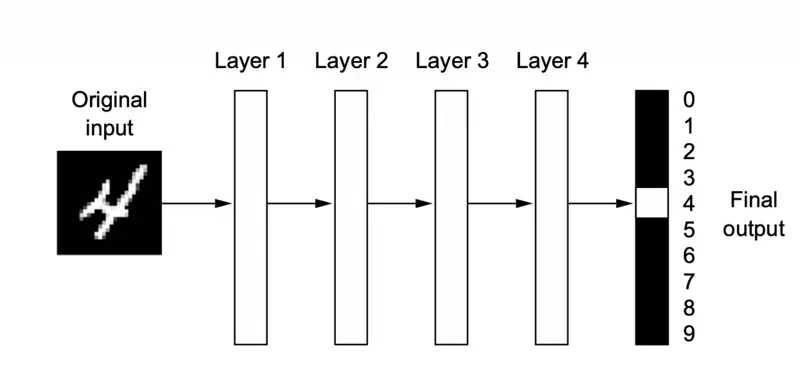

🖼️ A deep neural network for digit classification

📘 Deep learning is a multistage way to learn data representations.

💡 It’s a simple idea - but, as it turns out, very simple mechanisms, sufficiently scaled, can end up looking like magic.

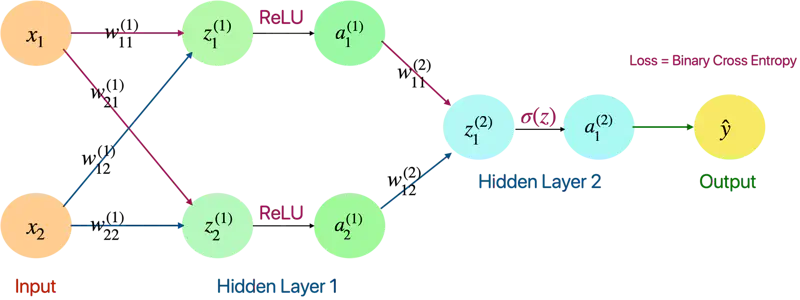

🖼️ A fully connected neural network

Feature Engineering: Deep learning completely automates what used to be the most crucial step in a machine learning workflow, making problem-solving much easier.

Read more about Feature Engineering

Performance: Better performance for solving many kinds of problems, especially with unstructured data.

Although deep learning is a fairly old subfield of machine learning, it only rose to prominence in the early 2010s.

- Perceptron (1957), Frank Rosenblatt

- Backpropagation (1986), David Rumelhart, Geoffrey Hinton, and Ronald Williams

- LSTM (1997), Sepp Hochreiter and Jürgen Schmidhuber

Some of the important algorithms of deep learning, such as backpropagation and long short-term memory (for time series), were well understood before the 2000s and have barely changed since then.

Breakthrough Moment

It began with a win in academic image-classification competitions with GPU-trained deep neural networks.

🚀 But the watershed moment came in 2012, with the entry of Geoffrey Hinton’s group in the yearly large-scale image-classification challenge ImageNet (ImageNet Large Scale Visual Recognition Challenge, or ILSVRC for short).

ImageNet

The ImageNet challenge was very difficult at the time, consisting of classifying high-resolution color images into 1,000 different categories after training on 1.4 million images.

- In 2011, the top-five accuracy of the winning model, based on classical approaches to computer vision, was only 74.3%.

- Then, in 2012, a team led by Alex Krizhevsky and advised by Geoffrey Hinton was able to achieve a top-five accuracy of 83.6% — a significant breakthrough.

- Since then, the competition has been dominated by deep convolutional neural networks.

Note: By 2015, the winner reached an accuracy of 96.4%, and the classification task on ImageNet was considered to be a completely solved problem.

Driving Forces

- Hardware

- Datasets and Benchmarks

- Algorithmic Advances

Note: The real bottlenecks throughout the 1990s and 2000s were data and hardware.

Experiments and Engineering

Because the deep learning field is guided by experimental findings rather than by theory, algorithmic advances only become possible when appropriate data and hardware are available to try new ideas (or to scale up old ideas, as is often the case).

Machine learning isn’t mathematics or physics, where major advances can be done with a pen and a piece of paper.

🚀 It’s an engineering science.

Graphics Processing Unit (GPU)

Throughout the 2000s, companies like NVIDIA and AMD invested billions of dollars in developing fast, massively parallel chips (GPUs) for video games to render complex 3D scenes in real time on the computer screen.

This investment came to benefit the scientific community when, in 2007, NVIDIA launched CUDA, a programming interface for its line of GPUs.

Deep neural networks, consisting mostly of many small matrix multiplications, are also highly parallelizable using GPUs.

💡 AI is sometimes heralded as the new industrial revolution.

If deep learning is the steam engine of this revolution, then data is its coal: the raw material that powers our intelligent machines, without which nothing would be possible.

🌐 When it comes to data, in addition to the exponential progress in storage hardware over the past 20 years (following Moore’s law), the game changer has been the rise of the internet, making it feasible to collect and distribute very large datasets for machine learning.

Today, large companies work with image datasets, video datasets, and natural language datasets that could not have been collected without the internet.

In addition to hardware and data, until the late 2000s, we were missing a reliable way to train very deep neural networks.

As a result, neural networks were still fairly shallow, using only one or two layers of representations; thus, they weren’t able to shine against more-refined shallow methods such as SVMs and Random Forests.

- The key issue was that of gradient propagation through deep stacks of layers.

- The feedback signal used to train neural networks would fade away as the number of layers increased.
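The fading feedback signal can be illustrated with a rough sketch. It assumes that every layer scales the backpropagated signal by the same factor, and it picks 0.25 for that factor (the maximum slope of a sigmoid); both are simplifying assumptions made for illustration, and `gradient_factor` is a hypothetical helper name.

```python
def gradient_factor(depth, per_layer_factor=0.25):
    """Factor by which the feedback signal is scaled after `depth` layers.

    per_layer_factor=0.25 is an assumption: the maximum slope of a sigmoid.
    """
    return per_layer_factor ** depth


for depth in (1, 2, 5, 10, 20):
    print(f"layers={depth:2d}  remaining signal={gradient_factor(depth):.2e}")
```

Under these assumptions the signal shrinks geometrically with depth, which is why the earliest layers of a deep stack receive almost no update.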

🎯 This changed around 2009–2010 with the advent of several simple but important algorithmic improvements that allowed for better gradient propagation:

- Better activation functions for neural layers, such as ReLU.

- Better weight-initialization schemes, such as Xavier (2010) and He (2015) initialization.

- Better optimization schemes, such as RMSProp (2012) and Adam (2014).

- Advanced ways to improve gradient propagation, such as batch normalization (2015) and residual connections (2015).

2 - XOR Problem

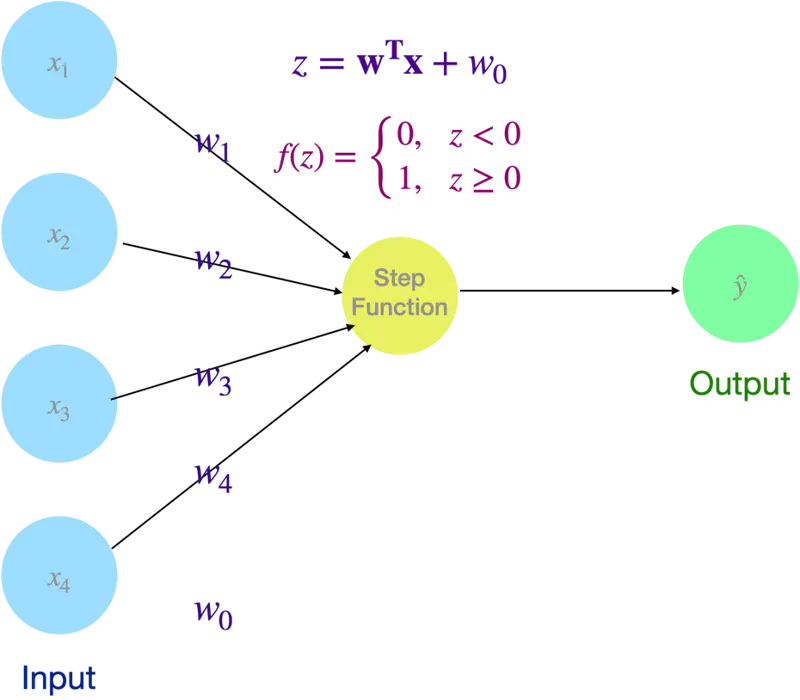

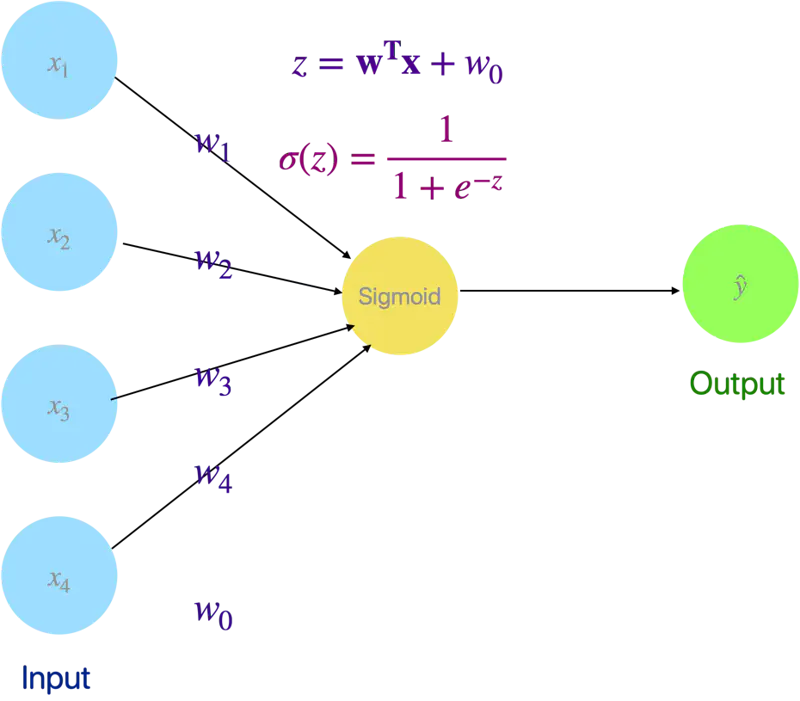

The perceptron is the simplest form of an artificial neural network, acting as a single-layer binary classifier that categorizes input data into one of two groups.

It serves as a mathematical model of a biological neuron, receiving multiple signals (inputs), weighting their importance, and deciding whether to ‘fire’ (output 1) or stay ‘inactive’ (output 0).
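A minimal sketch of this "weigh the inputs, then fire or stay inactive" behaviour is shown below. The weights, the bias, and the choice of a strict `> 0` threshold are hand-picked assumptions that happen to realize logical AND; they are not learned.

```python
def perceptron(inputs, weights, bias):
    """Weighted sum followed by a step function: 1 = 'fire', 0 = 'inactive'."""
    weighted_sum = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if weighted_sum > 0 else 0


# Hypothetical hand-picked parameters that realize logical AND.
and_weights, and_bias = [1.0, 1.0], -1.5

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", perceptron([a, b], and_weights, and_bias))
```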

🖼️ Perceptron

💡 Even Logistic Regression is a simple neural network with a sigmoid activation (instead of the step function used in the Perceptron).

🖼️ Logistic Regression as a Neural Network

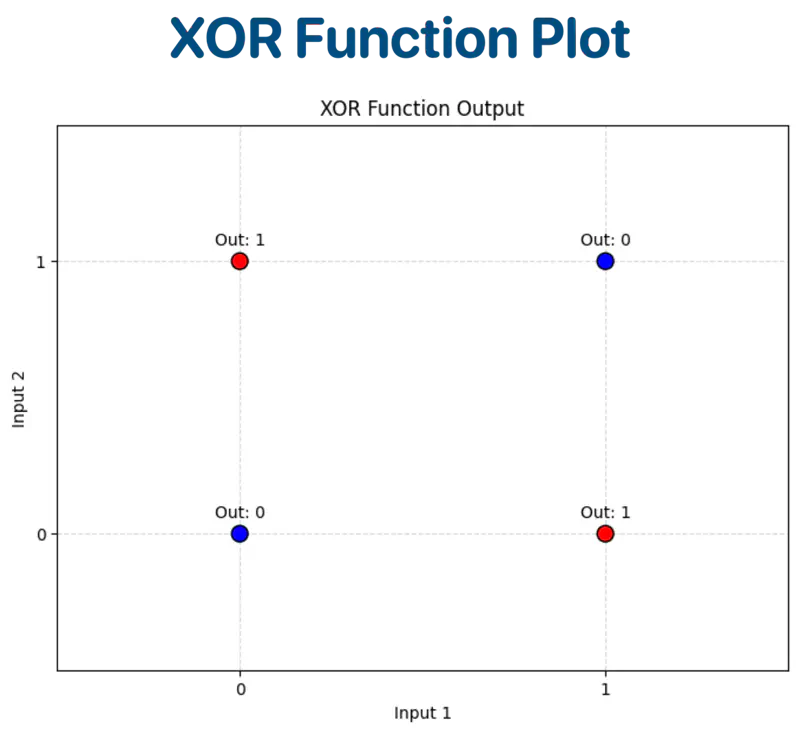

XOR Function:

| Input A | Input B | Output (A ⊕ B) |

|---|---|---|

| 0 | 0 | 0 |

| 0 | 1 | 1 |

| 1 | 0 | 1 |

| 1 | 1 | 0 |

Let’s plot the input and output on a graph for visualization.

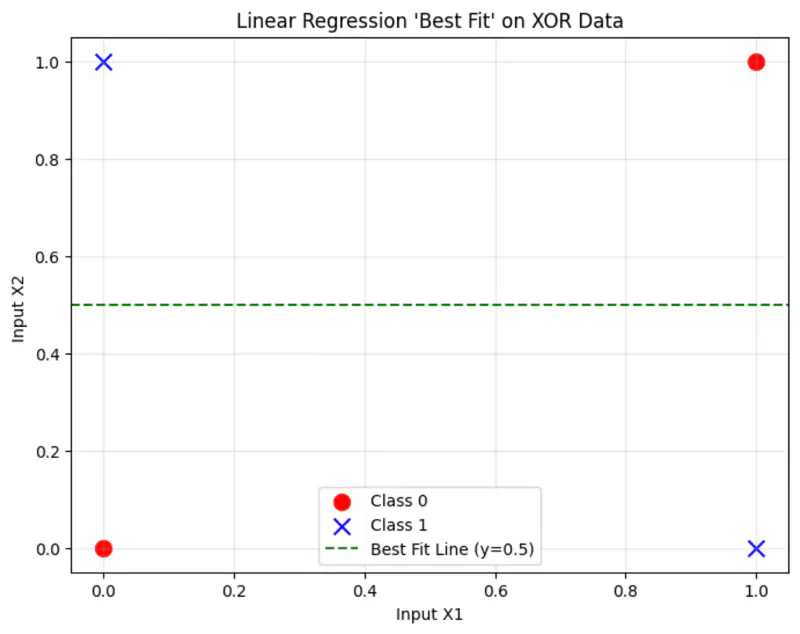

Linear Regression

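The cost function (mean squared error):

\[J(\mathbf{w}, w_0) = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2\]

where, \(\hat{y}_i = \mathbf{w}^T\mathbf{x}_i + w_0\)

Solving the normal equations, we get:

\(\mathbf{w} = \mathbf{0}, ~ w_0 = 0.5\)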

This implies that, whatever the input, we always get 0.5 as the output, because linear regression tries to fit the best line to the data, which in this case lies midway between the points.

That is clearly not the correct solution.
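The result can be checked numerically. The sketch below assumes a mean squared error cost over the four XOR points and verifies that its gradient vanishes at w = 0, w_0 = 0.5; since this cost is convex, a zero gradient marks the minimum. The helper names are illustrative.

```python
X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [0, 1, 1, 0]


def predict(w, w0, x):
    return w[0] * x[0] + w[1] * x[1] + w0


def mse(w, w0):
    return sum((yi - predict(w, w0, xi)) ** 2 for xi, yi in zip(X, y)) / len(X)


def mse_gradient(w, w0):
    """Gradient of the mean squared error with respect to (w1, w2, w0)."""
    n = len(X)
    residuals = [predict(w, w0, xi) - yi for xi, yi in zip(X, y)]
    g_w1 = 2 / n * sum(r * xi[0] for r, xi in zip(residuals, X))
    g_w2 = 2 / n * sum(r * xi[1] for r, xi in zip(residuals, X))
    g_w0 = 2 / n * sum(residuals)
    return g_w1, g_w2, g_w0


w, w0 = (0.0, 0.0), 0.5
print("gradient:", mse_gradient(w, w0))
print("predictions:", [predict(w, w0, xi) for xi in X])
print("MSE:", mse(w, w0))
```

Every prediction is 0.5, so the best linear fit carries no information about the XOR label.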

Logistic Regression

Similarly, logistic regression cannot find a single linear decision boundary to separate the 4 XOR outputs.

❌ No straight line can separate the XOR points.

Therefore, a linear model is not sufficient to represent the XOR function.

So, we need more than 1 neuron to solve the XOR problem (because logistic regression is a neural network with a single neuron).

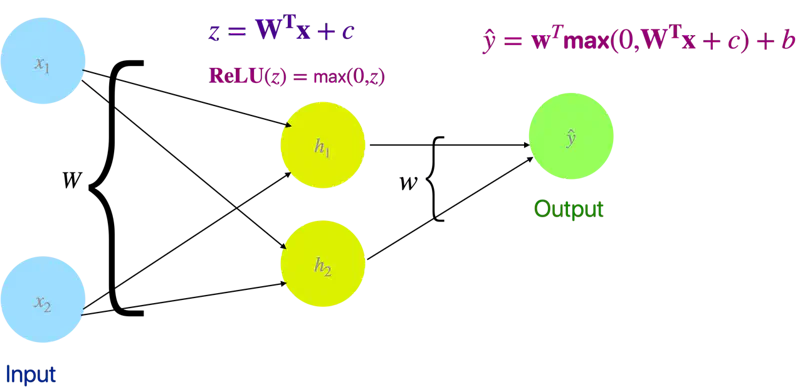

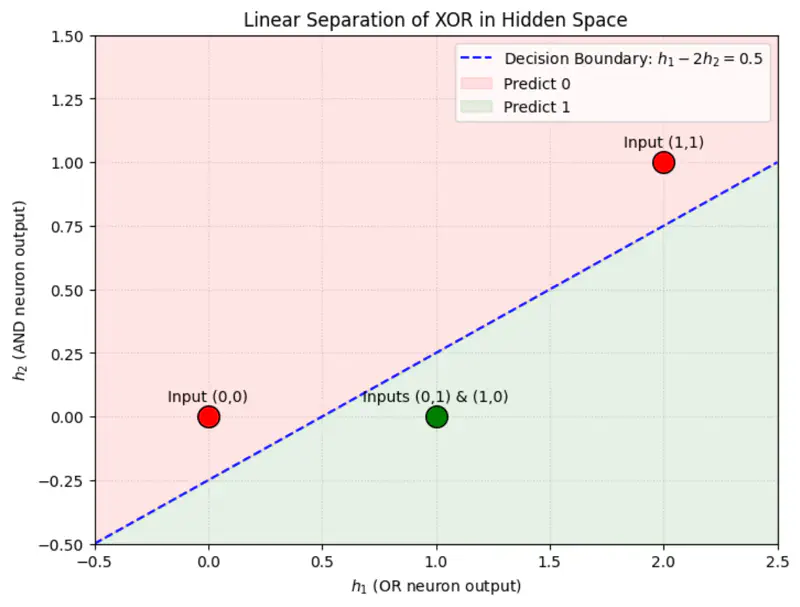

Let’s solve the XOR problem with a simple neural network that has 1 hidden layer of 2 neurons and uses the ReLU activation function.

1 Hidden layer and 2 Neurons

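We will use linear algebra to demonstrate one of the solutions to the problem.

Let input = X and output = Y.

\[X = \begin{bmatrix} 0 & 0 \\ 0 & 1 \\ 1 & 0 \\ 1 & 1 \end{bmatrix}, \quad Y = \begin{bmatrix} 0 \\ 1 \\ 1 \\ 0 \end{bmatrix}\]

Out of many possible solutions, let’s look at the solution below.

Weight and bias of hidden layer (the bias ‘c’ is added to every row):

\[W = \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix}, \quad c = \begin{bmatrix} 0 & -1 \end{bmatrix}\]

Output of hidden layer: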

\[z = XW + c\]

\[ XW = \begin{bmatrix} 0 & 0 \\ 0 & 1 \\ 1 & 0 \\ 1 & 1 \end{bmatrix} \begin{bmatrix} 1 & 1 \\ 1 & 1 \end{bmatrix} = \begin{bmatrix} 0 & 0 \\ 1 & 1 \\ 1 & 1 \\ 2 & 2 \end{bmatrix} \]

\[ z = \begin{bmatrix} 0 & 0 \\ 1 & 1 \\ 1 & 1 \\ 2 & 2 \end{bmatrix} + \begin{bmatrix} 0 & -1 \\ 0 & -1 \\ 0 & -1 \\ 0 & -1 \end{bmatrix} = \begin{bmatrix} 0 & -1 \\ 1 & 0 \\ 1 & 0 \\ 2 & 1 \end{bmatrix} \]

Now, let’s apply the ReLU activation function to the output ‘z’ of the hidden layer:

\[\text{ReLU}(z) = \begin{bmatrix} 0 & 0 \\ 1 & 0 \\ 1 & 0 \\ 2 & 1 \end{bmatrix}\]

Applying the ReLU non-linearity changes the position of the points in the hidden space, and now the points can be separated by a line.

Weight and bias of output layer:

\[\mathbf{w} = \begin{bmatrix} 1 \\ -2 \end{bmatrix}, \quad b = 0\]

Output:

\[\hat{y} = \text{max}(0, ~ XW + c) ~ \mathbf{w} + b\]

\[\hat{y} = \begin{bmatrix} 0 & 0 \\ 1 & 0 \\ 1 & 0 \\ 2 & 1 \end{bmatrix} \begin{bmatrix} 1 \\ -2 \end{bmatrix} = \begin{bmatrix} 0 \\ 1 \\ 1 \\ 0 \end{bmatrix}\]

Therefore, we can see that we get the expected output for the XOR function.
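The hand-derived solution can be replayed in a few lines of plain Python. This is only a sketch of the forward pass with the weights used in the matrices above; the function names are illustrative.

```python
X = [[0, 0], [0, 1], [1, 0], [1, 1]]
W = [[1, 1], [1, 1]]   # hidden-layer weights
c = [0, -1]            # hidden-layer bias
w = [1, -2]            # output-layer weights
b = 0                  # output-layer bias


def relu(value):
    return max(0, value)


def forward(x):
    # Hidden pre-activation z = xW + c, one entry per hidden neuron.
    z = [sum(x[i] * W[i][j] for i in range(2)) + c[j] for j in range(2)]
    h = [relu(zj) for zj in z]
    # Output layer: weighted sum of the hidden activations plus bias.
    return sum(hj * wj for hj, wj in zip(h, w)) + b


print([forward(x) for x in X])  # [0, 1, 1, 0]
```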

import tensorflow as tf

import numpy as np

# 1. XOR Data

X = np.array([[0, 0], [0, 1], [1, 0], [1, 1]], dtype=np.float32)

y = np.array([[0], [1], [1], [0]], dtype=np.float32)

# 2. Build the Model (2 Hidden Neurons)

model = tf.keras.Sequential([
    # Hidden layer: 2 neurons (this run uses Leaky ReLU rather than plain ReLU)
    tf.keras.layers.Dense(2, activation='leaky_relu',
                          kernel_initializer='he_normal',
                          input_shape=(2,), name='hidden_layer'),
    # Output layer (Linear for MSE)
    tf.keras.layers.Dense(1, name='output_layer')
])

print("--- Model Architecture ---")

model.summary()

# 3. Compile

model.compile(optimizer=tf.keras.optimizers.Adam(learning_rate=0.05), loss='mse')

# 4. Train

print("Training XOR Neural Network ...")

model.fit(X, y, epochs=100, verbose=0)

# 5. Extract and Print Final Weights

weights = model.get_weights()

W, c, w_out, b = weights

print("\n--- Final Weights (W) ---")

print(W)

print(f"\nHidden Bias (c): {c}")

print(f"\nOutput Weights (w): \n{w_out}")

print(f"Output Bias (b): {b}")

# 6. Predictions

print("\n--- Final Predictions ---")

preds = model.predict(X)

for i in range(len(X)):
    print(f"Input: {X[i]} | Raw Output: {preds[i][0]:.4f} | Rounded: {int(np.round(preds[i][0]))}")

Output:

--- Model Architecture ---

Model: "sequential_19"

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━━━━━━━━┓

┃ Layer (type) ┃ Output Shape ┃ Param # ┃

┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━━━━━━━━┩

│ hidden_layer (Dense) │ (None, 2) │ 6 │

├─────────────────────────────────┼────────────────────────┼───────────────┤

│ output_layer (Dense) │ (None, 1) │ 3 │

└─────────────────────────────────┴────────────────────────┴───────────────┘

Total params: 9 (36.00 B)

Trainable params: 9 (36.00 B)

Non-trainable params: 0 (0.00 B)

Training XOR Neural Network ...

--- Final Weights (W) ---

[[-1.1186169 1.8888004]

[ 1.0687382 -1.8048155]]

Hidden Bias (c): [-0.20543203 -0.18170778]

Output Weights (w):

[[1.3961141]

[0.7466789]]

Output Bias (b): [0.0938225]

--- Final Predictions ---

1/1 ━━━━━━━━━━━━━━━━━━━━ 0s 105ms/step

Input: [0. 0.] | Raw Output: 0.0093 | Rounded: 0

Input: [0. 1.] | Raw Output: 1.0024 | Rounded: 1

Input: [1. 0.] | Raw Output: 0.9988 | Rounded: 1

Input: [1. 1.] | Raw Output: 0.0079 | Rounded: 0

3 - Activation Functions

Real-world data (images, speech, text, financial trends) is rarely linear.

Non-linearity allows the network to learn and represent complex mappings between inputs and outputs.

- It enables the network to become a ‘Universal Function Approximator’.

A neural network with the following properties can approximate any continuous function (on a bounded input range, to any desired accuracy, given enough hidden neurons).

- at least one hidden layer

- nonlinear activation

🎯 This result, known as the universal approximation theorem, is the mathematical reason why neural networks are so powerful.

A deep neural network without any non-linear activation collapses into a single linear layer.

Say f(x) = ax and g(x) = bx,

then, g(f(x)) = g(ax) = (ba)x = cx

where, c = ba (another constant)

Effectively, the composition g(f(x)) of the two linear functions can be represented by a single linear function h(x) = cx.

❌ So depth becomes useless.
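The same collapse holds for weight matrices. The sketch below uses two hypothetical 2×2 linear layers (no bias, no activation) and shows that applying them one after the other gives the same result as a single layer whose weight matrix is their product.

```python
def matmul(A, B):
    """Multiply two matrices given as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B)))
             for j in range(len(B[0]))] for i in range(len(A))]


# Two hypothetical linear layers without bias or activation.
W1 = [[1.0, 2.0], [0.0, -1.0]]
W2 = [[3.0, 0.5], [1.0, 4.0]]
x = [[2.0, -3.0]]                        # one input as a row vector

two_layers = matmul(matmul(x, W1), W2)   # pass through layer 1, then layer 2
one_layer = matmul(x, matmul(W1, W2))    # single collapsed weight matrix
print(two_layers, one_layer)
```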

- Sigmoid

- Tanh (Hyperbolic Tangent)

- ReLU (Rectified Linear Unit)

- Leaky ReLU

- Softmax

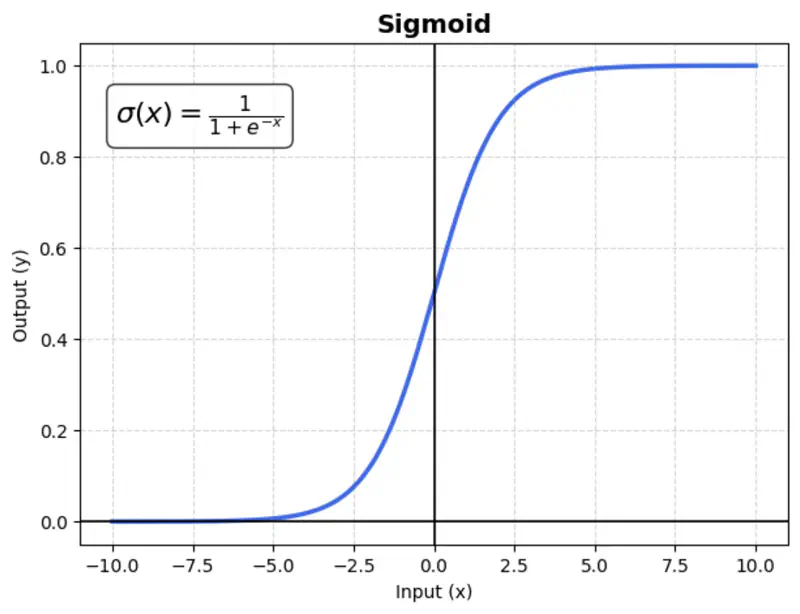

Sigmoid is a mathematical function with a characteristic “S”-shaped curve (sigmoid curve) that maps any real-valued number into a range between 0 and 1.

\[\sigma(x) = \frac{1}{1 + e^{-x}}\]

Usage:

Mostly used in binary classification output layers.

Issue:

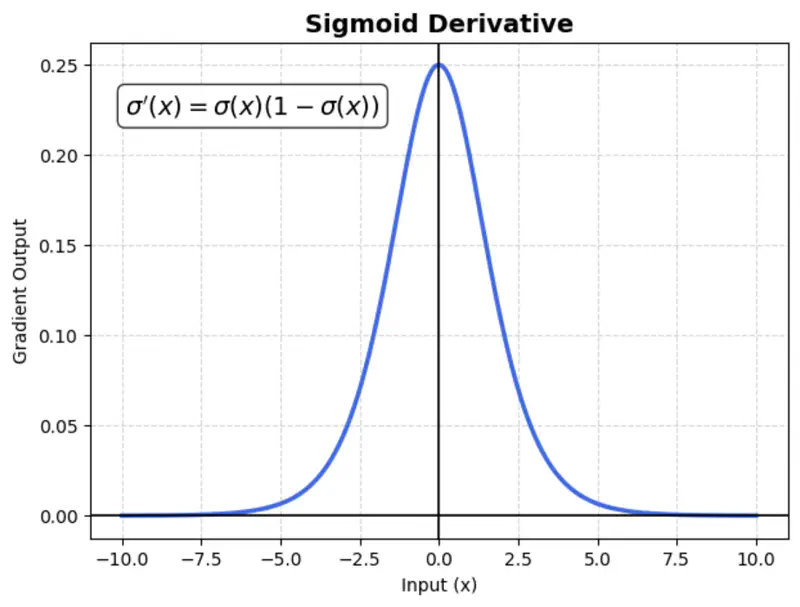

Sigmoid suffers from the vanishing gradient problem (gradients become near-zero for large positive or large negative input values), which slows down training.
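The saturation is easy to see numerically. This short sketch evaluates the sigmoid and its derivative, \(\sigma(x)(1 - \sigma(x))\), at a few sample inputs chosen for illustration.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x):
    s = sigmoid(x)
    return s * (1.0 - s)


for x in (-10.0, -2.0, 0.0, 2.0, 10.0):
    print(f"x={x:6.1f}  sigmoid={sigmoid(x):.5f}  derivative={sigmoid_derivative(x):.5f}")
```

The derivative peaks at 0.25 for x = 0 and is practically zero at x = ±10.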

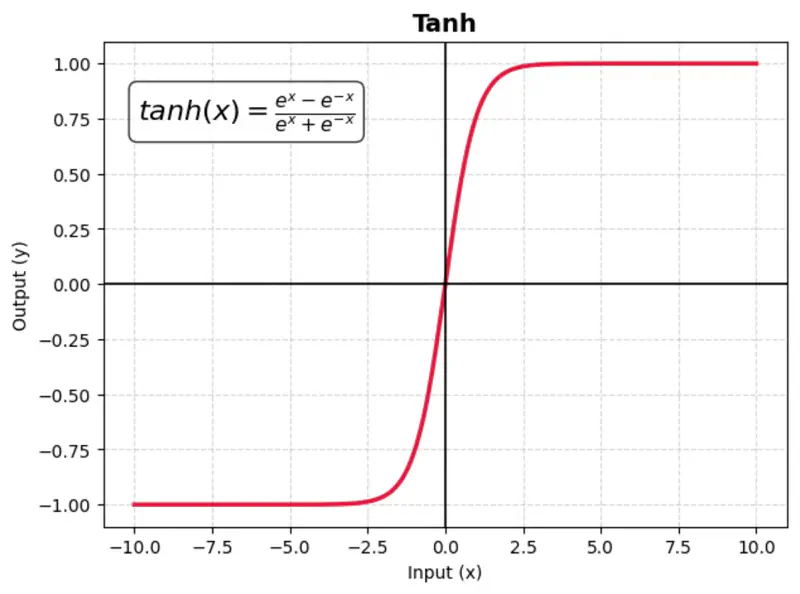

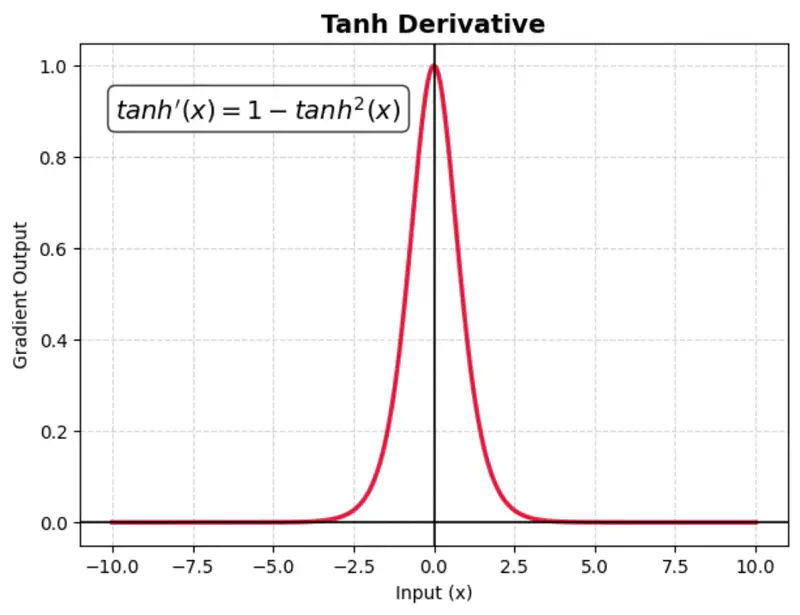

Tanh is a mathematical function with an S-shaped curve that maps any real-valued number into a range between -1 and 1.

It is zero-centered, making it more effective than the sigmoid function for hidden layers in neural networks.

Benefit:

Makes optimization faster, as its outputs are zero-centered.

Issue:

Tanh also suffers from the vanishing gradient problem (gradients become near-zero for large positive or large negative input values), which slows down training.
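Both properties can be checked with a small sketch: for inputs that are symmetric around zero (an assumption made for the demonstration), tanh outputs average to 0 while sigmoid outputs average to 0.5, and the tanh slope \(1 - \tanh^2(x)\) is already tiny at x = 5.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


inputs = [-3.0, -1.0, 0.0, 1.0, 3.0]   # symmetric around zero

mean_sigmoid = sum(sigmoid(x) for x in inputs) / len(inputs)
mean_tanh = sum(math.tanh(x) for x in inputs) / len(inputs)
tanh_slope_at_5 = 1.0 - math.tanh(5.0) ** 2   # derivative of tanh is 1 - tanh^2

print(mean_sigmoid, mean_tanh, tanh_slope_at_5)
```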

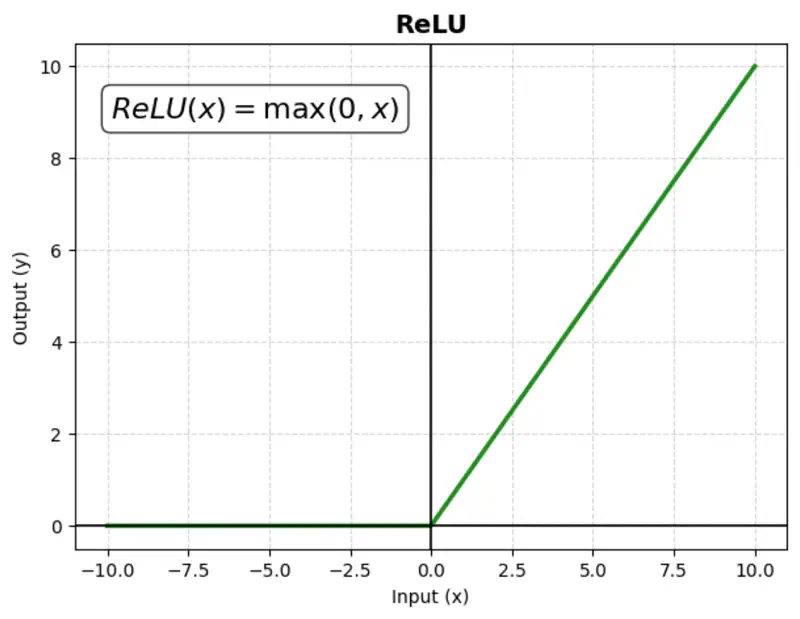

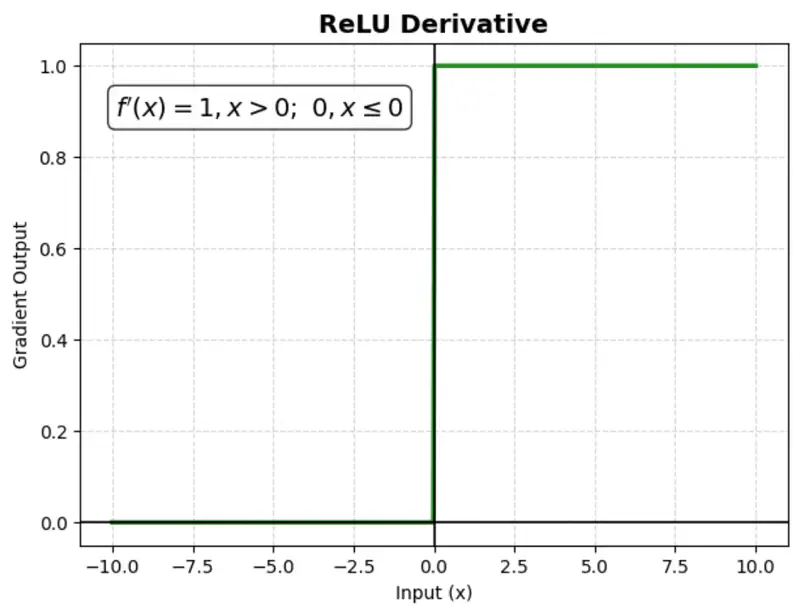

ReLU is a mathematical function that outputs the input value directly if it is positive, and zero otherwise, i.e., \(\text{ReLU}(x) = \max(0, x)\).

It is computationally simple, very fast to compute, and does not saturate in the positive direction.

Benefit:

It is computationally efficient and helps mitigate the ‘vanishing gradient’ problem, making it the most popular choice for hidden layers.

Issue:

‘Dying ReLU’ problem: negative inputs result in a zero gradient, so a neuron whose input stays negative stops learning (no weight updates).

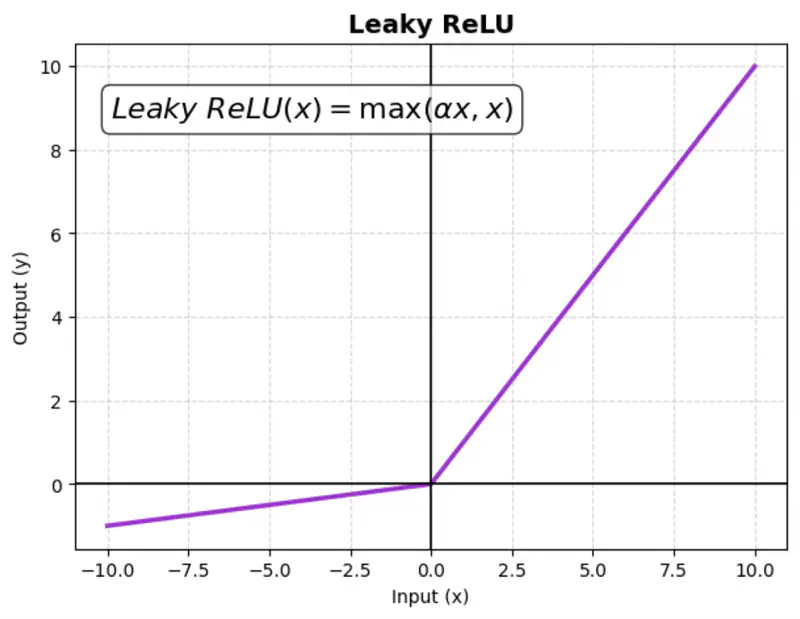

Instead of setting negative input values to zero like a standard ReLU, Leaky ReLU allows a small, non-zero gradient (slope) for negative values.

This ensures that neurons continue learning (even for negative values).

\[f(x) = \begin{cases} x & \text{if } x > 0 \\ \alpha x & \text{otherwise} \end{cases}\]

where ‘\(\alpha\)’ is a small constant (e.g., 0.01)

Benefit:

Fixes the ‘dying ReLU’ problem.
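The difference between the two activations shows up in their gradients for negative inputs. The sketch below uses \(\alpha = 0.01\) and treats the gradient at exactly x = 0 like the negative side, which is a convention chosen here for simplicity.

```python
def relu(x):
    return x if x > 0 else 0.0


def relu_grad(x):
    return 1.0 if x > 0 else 0.0


def leaky_relu(x, alpha=0.01):
    return x if x > 0 else alpha * x


def leaky_relu_grad(x, alpha=0.01):
    return 1.0 if x > 0 else alpha


for x in (-2.0, 3.0):
    print(x, relu(x), relu_grad(x), leaky_relu(x), leaky_relu_grad(x))
```

For x = -2 the ReLU gradient is 0 (no update), while the Leaky ReLU gradient is 0.01, so a small learning signal still flows.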

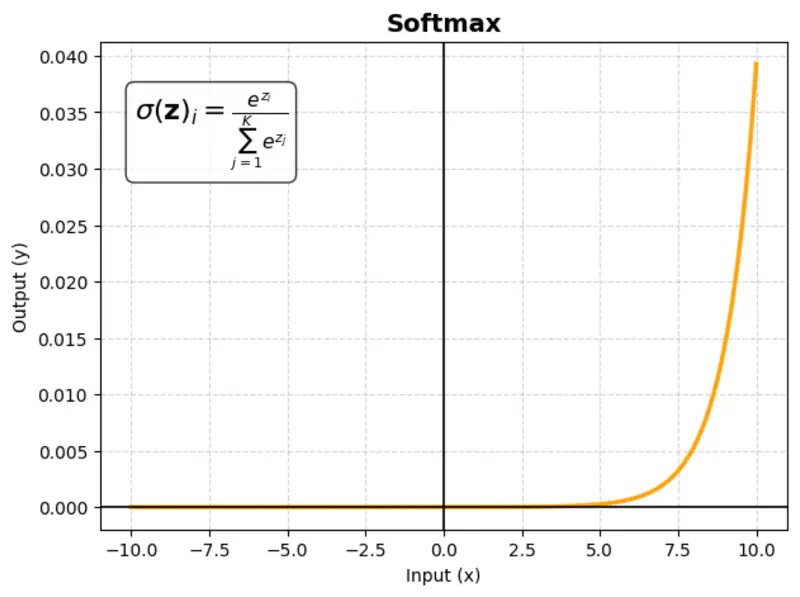

Softmax is a multivariate activation function that takes a vector of raw scores (logits) and converts them into a probability distribution; the probabilities sum to 1.

\[\sigma(\mathbf{z})_i = \frac{e^{z_i}}{\sum_{j=1}^K e^{z_j}}\]

where ‘K’ = number of classes

Usage:

Almost exclusively used in the output layer of multi-class classification networks.

Example:

Consider an AI model reading a product review to categorize the customer’s mood into three classes: Positive, Neutral, or Negative.

| Class | Score (Logit) | Softmax |

|---|---|---|

| Positive | 3 | 82.1% |

| Neutral | 1 | 11.1% |

| Negative | 0.5 | 6.7% |

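For example, the Positive probability is \(\frac{e^{3}}{e^{3} + e^{1} + e^{0.5}} \approx 0.821\). Similarly, you can calculate the probabilities for the Neutral and Negative sentiments.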
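The table can be reproduced with a short softmax sketch. Subtracting the largest logit before exponentiating is a common numerical-stability trick added here; it does not change the result.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    shift = max(logits)                        # subtracting the max avoids overflow
    exps = [math.exp(z - shift) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


labels = ["Positive", "Neutral", "Negative"]
probs = softmax([3.0, 1.0, 0.5])
for label, p in zip(labels, probs):
    print(f"{label}: {p:.1%}")
```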

4 - Optimization Methods

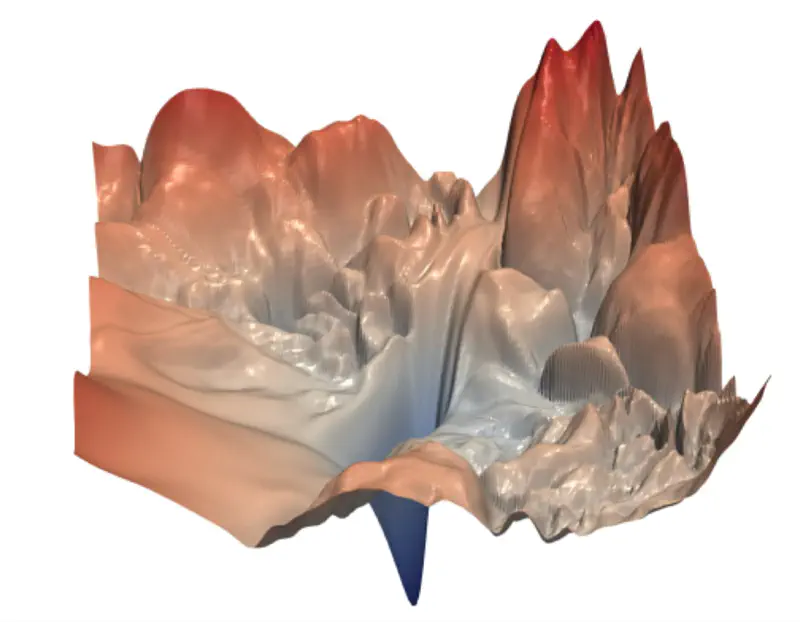

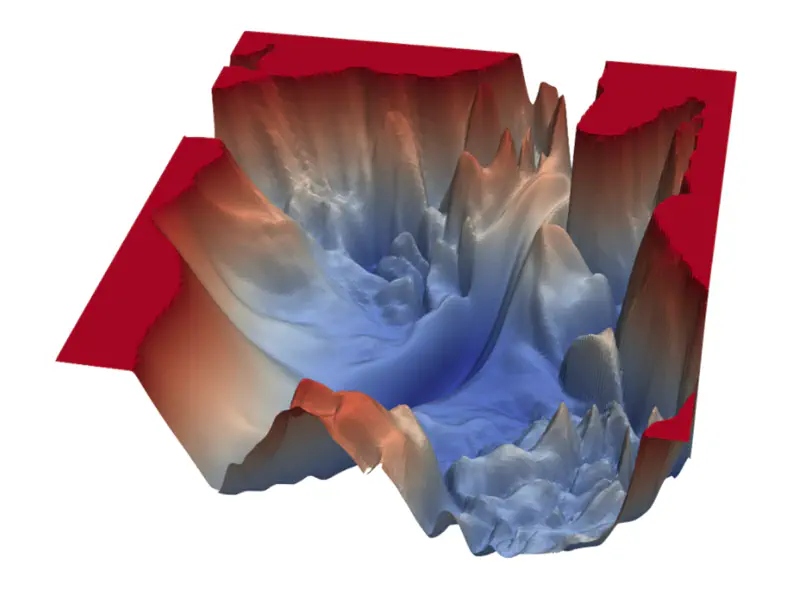

The loss function surface in deep learning is non-convex, i.e., it has multiple local minima, saddle points, and plateaus rather than a single, global minimum.

So, in the context of neural network training, we usually do not care about finding the exact (global) minimum of a function, but seek only to reduce its value sufficiently to obtain good generalization error.

🖼️ Non-Convex Loss Surface Examples

Because of the non-convex loss surface, convergence to a good minimum is often slow, for several reasons:

- Multiple local minima; the optimizer may not land in a good enough local minimum.

- Saddle points; near a saddle point, the optimizer barely moves.

- Flat regions (plateaus), where the gradient is near zero, offering minimal guidance for the optimizer.

- “Ravine-like” structure (steep in one direction, flat in the other), where stochastic gradient descent oscillates across the steep walls instead of moving along the valley floor.

- Different parameters require different learning rates; e.g., parameters tied to sparse features get very few updates.

Training deep neural networks is inherently complex because of the multiple layers and the vast number of parameters to be updated during training.

Therefore, we need to find ways to accelerate the optimization process.

The optimization process can be accelerated considerably by using stochastic gradient descent (instead of simple gradient descent), i.e., by following the gradient of randomly selected mini-batches downhill.

\[w_{new} = w_{old} - \eta \cdot \text{(average gradient of } m \text{ randomly chosen data points)}\]

where, \(\eta\) = learning rate

In practice, it is common to decay the learning rate linearly until some pre-defined fixed number of iterations ‘\(\tau\)’.

The primary reason for this approach is to start with a high learning rate to rapidly traverse the loss landscape and escape poor local minima, while later using a small learning rate to fine-tune the parameters and settle into a deeper, more stable minimum without oscillating around it.

\[\eta_k = (1-\alpha)\eta_0 + \alpha\eta_{\tau}\]

where, \(\alpha = \frac{k}{\tau}\)

After ‘\(\tau\)’ iterations, keep the learning rate constant at \(\eta_{\tau}\).

e.g., \(\eta_0 = 0.1,~ \eta_{\tau}=0.01, \text{ and } \tau=100\)
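A minimal sketch of this schedule, using the example values above as defaults (`linear_decay` is an illustrative name, not a library function):

```python
def linear_decay(k, eta_0=0.1, eta_tau=0.01, tau=100):
    """Learning rate at iteration k: linear from eta_0 to eta_tau, then constant."""
    if k >= tau:
        return eta_tau
    alpha = k / tau
    return (1 - alpha) * eta_0 + alpha * eta_tau


for k in (0, 50, 100, 500):
    print(k, linear_decay(k))
```

Halfway through (k = 50) the rate is the midpoint of the two values, 0.055, and from k = 100 onward it stays at 0.01.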

Say, we have ‘n’ samples, and we divide them into mini-batches such that each mini-batch has ‘m’ < ‘n’ samples.

- 1 iteration = weight update after computing the gradient of 1 mini-batch

- 1 epoch = one complete pass through the entire training dataset = n/m iterations

- L epochs = L x (n/m) iterations

Note:

- The size ‘m’ of a mini-batch is decided based on the available computing resources, such as RAM, GPU, or TPU memory; e.g., an NVIDIA H100 GPU has 80 GB of memory.

- In practice, the mini-batch size is chosen to be the largest possible power of 2 that fits within the available GPU memory while still allowing for good model performance.

- Samples in the mini-batches are randomized in every epoch.
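The relationship between mini-batches, iterations, and epochs can be sketched with a toy mini-batch SGD loop. The one-parameter model y = w·x, the squared-error loss, the toy data, and the hyperparameters are all assumptions chosen to keep the example small.

```python
import random


def minibatch_sgd(data, m, epochs, eta, seed=0):
    """Fit y = w * x with mini-batch SGD on squared error; returns (w, iterations)."""
    rng = random.Random(seed)
    data = list(data)
    w, iterations = 0.0, 0
    for _ in range(epochs):
        rng.shuffle(data)                         # re-randomize every epoch
        for start in range(0, len(data), m):
            batch = data[start:start + m]
            # Average gradient of (w*x - y)^2 over the mini-batch.
            grad = sum(2 * (w * x - y) * x for x, y in batch) / len(batch)
            w -= eta * grad                       # one iteration = one update
            iterations += 1
    return w, iterations


# Hypothetical toy data generated from y = 3x (n = 8 samples).
samples = [(x / 4, 3 * x / 4) for x in range(1, 9)]
w, iterations = minibatch_sgd(samples, m=2, epochs=50, eta=0.1)
print(w, iterations)
```

With n = 8 and m = 2, each epoch consists of n/m = 4 iterations, so 50 epochs give 200 weight updates.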

Methods to accelerate the optimization process in deep learning:

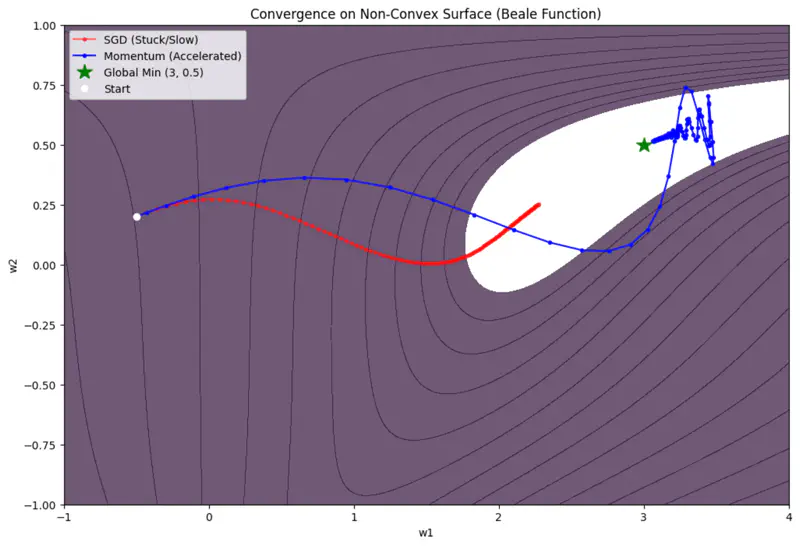

- Momentum Based; Polyak (1964) | Refined for Deep Learning: Sutskever et al. (2013)

- AdaGrad (Adaptive Gradient); Duchi, Hazan, and Singer (2011)

- RMSProp (Root Mean Square Propagation); Geoffrey Hinton (2012)

- Adam (Adaptive Moment Estimation); Kingma and Ba (2014)

💡 Momentum introduces velocity.

(term borrowed from Physics, where momentum = mass x velocity)

It ‘accumulates’ velocity in directions of consistent gradients and cancels out directions that fluctuate.

Algorithm

- For each iteration (t):

- Instead of moving purely by gradient: \[w_{t+1} = w_{t} - \eta . g_t\]

- Accumulate previous gradients, i.e., the velocity (speed + direction): \[ v_{t} = \gamma . v_{t-1} + \eta. g_t\]

- where, \( \gamma \) = momentum coefficient (typically 0.9)

- Update parameter: \[ w_{t+1} = w_{t} - v_{t} \]

The size of the step depends on how large and how well aligned a sequence of gradients is.

\[ \begin{aligned} \text{Let, } v_0 &= 0 \\ v_1 &= \gamma. v_0 + \eta.g_1 = \eta.g_1\\ v_2 &= \gamma. v_1 + \eta.g_2 = \gamma (\eta.g_1) + \eta.g_2 \\ v_3 &= \gamma. v_2 + \eta.g_3 = \gamma (\gamma (\eta.g_1) + \eta.g_2 ) + \eta.g_3 = \eta(\gamma^2 g_1 + \gamma g_2 + g_3)\\ v_{k} &= \eta(\gamma^{k-1} g_1 + \gamma^{k-2} g_2 + \dots + g_{k})\\ \end{aligned} \]

If many successive gradients point in exactly the same direction, then we want to take larger steps. With a constant gradient ‘g’:

\[ \lim_{k\rightarrow \infty} v_k = \eta.g(1+\gamma+ \gamma^2 + \dots) \]

The term inside the bracket is a geometric progression with the common ratio \(\gamma < 1\), so it sums to \(\frac{1}{1-\gamma}\).

So, if the momentum algorithm always observes gradient ‘g’, then it will accelerate in the direction of ‘g’, until reaching a terminal velocity where the size of each step is:

\[ \frac{\eta. \lVert g \rVert}{1-\gamma} \]

where, \(0 < \gamma < 1\)

Say, if \(\gamma\) = 0.9, then the terminal step size is 10 times the step size \(\eta \lVert g \rVert\) of plain gradient descent.
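The terminal velocity can be checked by iterating the velocity update with a constant gradient. The values g = 1, η = 0.1, and 200 steps are assumptions picked for the demonstration.

```python
def momentum_velocity(g, eta=0.1, gamma=0.9, steps=200):
    """Iterate v_t = gamma * v_{t-1} + eta * g for a constant gradient g."""
    v = 0.0
    for _ in range(steps):
        v = gamma * v + eta * g
    return v


g = 1.0
terminal = momentum_velocity(g)
print(terminal, 0.1 * g / (1 - 0.9))
```

The velocity settles near 1.0, which is 10 times the plain gradient descent step of η·g = 0.1.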

🖼️ Momentum Based Optimizer Vs SGD

Limitations

- Momentum can be like a heavy ball rolling down a hill; it gathers so much speed that it may overshoot the minima.

- It does not adjust the learning rate based on the importance of specific features.

💡 AdaGrad scales the learning rate for each parameter based on the historical sum of squares of its gradients.

Problem

In many datasets, some features are frequent while others are sparse.

e.g., predicting house prices based on a rare feature, such as the presence of a shopping mall nearby.

For most of the houses the value of that feature is 0.

A single learning rate ‘\(\eta\)’ for all parameters is inefficient.

We want larger updates for sparse features and smaller updates for frequent ones.

Algorithm

- For each iteration (t):

- Calculate gradient \(g_t\).

- Accumulate gradients: \[ r_{t} = r_{t-1} + g_t \odot g_t\]

- Update parameter: \[ w_{t+1} = w_{t} - \frac{\eta}{\sqrt{r_t} + \delta} \odot g_t \]

- where, \(\delta\) is a small smoothing term (e.g. \(10^{-8}\)) to avoid division by 0.

- if, \(g = \begin{bmatrix} g_1 \\ g_2 \\ \vdots \\ g_d \end{bmatrix} \), then \( g \odot g = \begin{bmatrix} g_1^2 \\ g_2^2 \\ \vdots \\ g_d^2 \end{bmatrix} \) (element-wise product)

Since \(r_{t} = r_{t-1} + g_t \odot g_t\), for sparse features the gradient ‘g’ is mostly 0, so these parameters hardly accumulate anything.

Therefore, their accumulation ‘r’ stays very small.

Since \(w_{t+1} = w_{t} - \frac{\eta}{\sqrt{r_t} + \delta} \odot g_t\), the effective learning rate is inversely proportional to the square root of the accumulation ‘r’.

Therefore, weights of sparse features get larger updates, whereas weights of frequent features build up very large accumulations, and as a result their learning rate keeps decaying.
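The effect on sparse versus frequent features can be sketched for a single parameter. The two gradient sequences below are made-up examples: one feature is active in every iteration, the other only in the last one.

```python
import math


def adagrad_steps(gradients, eta=0.1, delta=1e-8):
    """Return the AdaGrad step taken at each iteration for one parameter."""
    r, steps = 0.0, []
    for g in gradients:
        r += g * g                                   # accumulate squared gradient
        steps.append(eta / (math.sqrt(r) + delta) * g)
    return steps


frequent = [1.0] * 10                 # feature active in every iteration
sparse = [0.0] * 9 + [1.0]            # feature active only in the last one

print("frequent, last step:", adagrad_steps(frequent)[-1])
print("sparse,   last step:", adagrad_steps(sparse)[-1])
```

Both parameters see the same gradient in the last iteration, yet the sparse one takes a step about three times larger because its accumulation is still small.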

Limitation

Vanishing Learning Rate:

Since the accumulation of squared gradients increases monotonically, the effective learning rate shrinks until it becomes infinitesimally small, effectively ‘killing’ the learning process before the model converges.
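A small sketch makes the decay concrete. It assumes a constant gradient g = 1 for a single parameter, so that the accumulation after t iterations is simply t·g²; `effective_learning_rate` is an illustrative helper.

```python
import math


def effective_learning_rate(t, g=1.0, eta=0.1, delta=1e-8):
    """eta / (sqrt(r_t) + delta) after t iterations with a constant gradient g."""
    r_t = t * g * g
    return eta / (math.sqrt(r_t) + delta)


for t in (1, 100, 10_000, 1_000_000):
    print(t, effective_learning_rate(t))
```

Under this assumption the effective rate falls like 1/√t and never recovers.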