Conditional Probability


It is the probability of an event occurring, given that another event has already occurred.

It allows us to update a probability when additional information is revealed.

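For events \(A\) and \(B\) with \(P(A) > 0\), the conditional probability of \(B\) given \(A\) is \(P(B \mid A) = \frac{P(A \cap B)}{P(A)}\). Rearranging gives the chain rule:

\(P(A \cap B) = P(A)*P(B \mid A)\)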

- Roll a die, sample space: \(\Omega = \{1,2,3,4,5,6\}\)

Event A = Get a 5 = \(\{5\} \Rightarrow P(A) = 1/6\)

Event B = Get an odd number = \(\{1, 3, 5\} \Rightarrow P(B) = 3/6 = 1/2\)

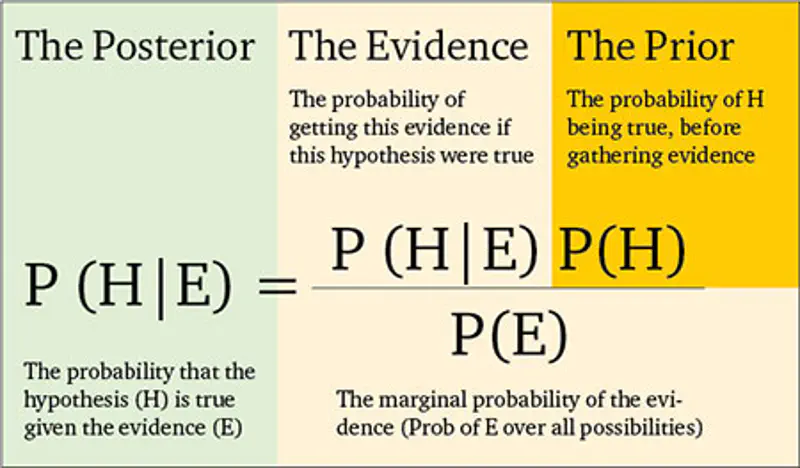

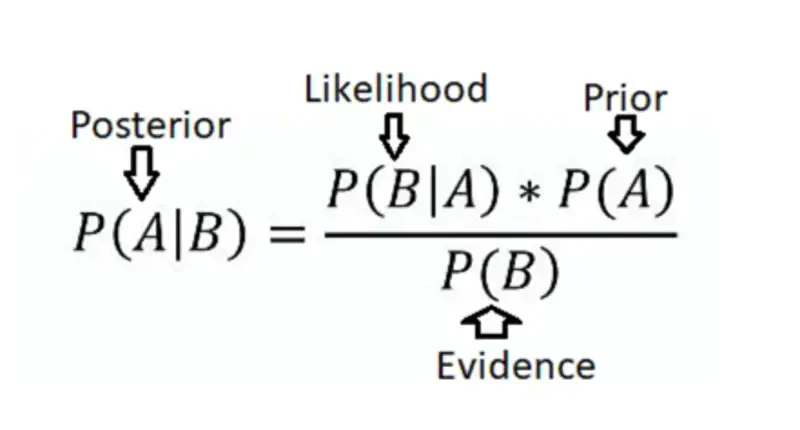
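The die example can be checked by direct enumeration. The sketch below is only illustrative: the helper names `prob` and `cond_prob` are assumptions introduced here, and it assumes a fair die, so every outcome is equally likely.

```python
from fractions import Fraction

# Sample space for one roll of a fair six-sided die.
omega = {1, 2, 3, 4, 5, 6}
A = {5}          # event A: roll a 5
B = {1, 3, 5}    # event B: roll an odd number


def prob(event, space=omega):
    """Probability of an event when all outcomes are equally likely."""
    return Fraction(len(event & space), len(space))


def cond_prob(a, b):
    """P(a | b) = P(a and b) / P(b); undefined when P(b) is 0."""
    if prob(b) == 0:
        raise ValueError("cannot condition on an event of probability 0")
    return prob(a & b) / prob(b)


p_a_given_b = cond_prob(A, B)   # rolling a 5, given the roll is odd
p_b_given_a = cond_prob(B, A)   # rolling an odd number, given the roll is 5
print(p_a_given_b, p_b_given_a)  # 1/3 1
```

Using `Fraction` keeps the results exact, which makes them easy to compare against the hand calculation.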

Bayes’ Theorem is a formula built on conditional probability.

It allows us to update our belief about an event’s probability based on new evidence.

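We know from conditional probability and the chain rule that:

$$ \begin{aligned} P(A \cap B) &= P(A)*P(B \mid A) \\ P(A \cap B) &= P(B)*P(A \mid B) \end{aligned} $$

Equating the two expressions and dividing by \(P(B)\), assuming \(P(B) > 0\), gives Bayes’ Theorem: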

$$ \begin{aligned} P(A \mid B) = \frac{P(A)*P(B \mid A)}{P(B)} \end{aligned} $$

- Roll a die, sample space: \(\Omega = \{1,2,3,4,5,6\}\)

Event A = Get a 5 = \(\{5\} \Rightarrow P(A) = 1/6\)

Event B = Get an odd number = \(\{1, 3, 5\} \Rightarrow P(B) = 3/6 = 1/2\)

Task: Find the probability of getting a 5 given that you rolled an odd number.

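\(P(B \mid A) = 1\), the probability of getting an odd number given that we have rolled a 5.

Substituting into Bayes’ Theorem:

$$ \begin{aligned} P(A \mid B) = \frac{P(A)*P(B \mid A)}{P(B)} = \frac{(1/6)*1}{1/2} = \frac{1}{3} \end{aligned} $$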
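The same task can be worked numerically. This is a minimal sketch, assuming the three inputs are already known; the function name `bayes` is a hypothetical helper, not something defined in these notes.

```python
from fractions import Fraction


def bayes(p_a, p_b_given_a, p_b):
    """Return P(A | B) = P(A) * P(B | A) / P(B)."""
    if p_b == 0:
        raise ValueError("P(B) must be positive")
    return p_a * p_b_given_a / p_b


p_a = Fraction(1, 6)        # P(A): rolling a 5
p_b = Fraction(1, 2)        # P(B): rolling an odd number
p_b_given_a = Fraction(1)   # P(B | A): a 5 is always odd

posterior = bayes(p_a, p_b_given_a, p_b)
print(posterior)  # 1/3
```

Knowing the roll is odd raises the probability of a 5 from 1/6 to 1/3, since only three outcomes remain possible.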

Now, let’s understand another concept called the Law of Total Probability.

Here, the sample space \(\Omega\) is divided into two parts: \(A\) and its complement \(A^\complement\).

So, the probability of an event \(B\) is given by:

\[ B = (B \cap A) \cup (B \cap A^\complement) \\ P(B) = P(B \cap A) + P(B \cap A^\complement) \\ \text{By the chain rule: } P(B) = P(A)*P(B \mid A) + P(A^\complement)*P(B \mid A^\complement) \]

The probabilities add because \(B \cap A\) and \(B \cap A^\complement\) are disjoint. The result is the overall probability of an event \(B\), considering all the different, mutually exclusive ways it can occur.
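As a quick check, assume the fair-die events used earlier, \(A = \{5\}\) and \(B = \{1, 3, 5\}\). The complement \(A^\complement = \{1, 2, 3, 4, 6\}\) contains two odd outcomes out of five, so \(P(B \mid A^\complement) = 2/5\), and:

\[ P(B) = \frac{1}{6}*1 + \frac{5}{6}*\frac{2}{5} = \frac{1}{6} + \frac{2}{6} = \frac{1}{2} \]

This agrees with the value \(P(B) = 1/2\) obtained by counting the odd outcomes directly.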

If \(A_1, A_2, \ldots, A_n\) are a set of events that partition the sample space, such that they are:

- Mutually exclusive: \(A_i \cap A_j = \emptyset\) for all \(i \neq j\)

- Exhaustive: \(A_1 \cup A_2 \cup \ldots \cup A_n = \Omega\)

then

\[ P(B) = \sum_{i=1}^{n} P(A_i)*P(B \mid A_i) \]

where \(n\) is the number of mutually exclusive events in the partition of the sample space \(\Omega\).
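The general sum can be sketched in a few lines. The function name `total_probability` and the three-part partition of the die outcomes are assumptions chosen for illustration; they do not come from the notes above.

```python
from fractions import Fraction


def total_probability(priors, likelihoods):
    """Return P(B) = sum_i P(A_i) * P(B | A_i) for a partition A_1..A_n."""
    if len(priors) != len(likelihoods):
        raise ValueError("need one likelihood per partition event")
    if sum(priors) != 1:
        raise ValueError("partition probabilities must sum to 1")
    return sum(p * l for p, l in zip(priors, likelihoods))


# Hypothetical partition of a fair die's outcomes: {1, 2}, {3, 4}, {5, 6}.
priors = [Fraction(1, 3)] * 3
# B = "odd number": each part holds exactly one odd outcome out of two.
likelihoods = [Fraction(1, 2)] * 3

p_b = total_probability(priors, likelihoods)
print(p_b)  # 1/2
```

The check that the priors sum to 1 is a cheap guard for the exhaustive condition; it cannot verify mutual exclusivity, which the caller has to ensure.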

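Now, we can also generalize Bayes’ Theorem using the Law of Total Probability. For any event \(A_k\) in the partition, replacing \(P(B)\) in the denominator gives:

$$ \begin{aligned} P(A_k \mid B) = \frac{P(A_k)*P(B \mid A_k)}{\sum_{i=1}^{n} P(A_i)*P(B \mid A_i)} \end{aligned} $$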
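One way to picture the generalization: each partition event's posterior is its term \(P(A_k)*P(B \mid A_k)\) divided by the sum of all such terms, which is \(P(B)\). The sketch below assumes a hypothetical partition of the die outcomes, and `posterior` is an illustrative helper name.

```python
from fractions import Fraction


def posterior(priors, likelihoods):
    """Return [P(A_k | B) for each k] given P(A_i) and P(B | A_i)."""
    joint = [p * l for p, l in zip(priors, likelihoods)]
    evidence = sum(joint)  # P(B), by the Law of Total Probability
    if evidence == 0:
        raise ValueError("P(B) is 0, so the posterior is undefined")
    return [j / evidence for j in joint]


# Hypothetical partition of a fair die: A1 = {1, 2}, A2 = {3, 4, 5}, A3 = {6}.
priors = [Fraction(2, 6), Fraction(3, 6), Fraction(1, 6)]
# B = "odd number": one of two, two of three, and none of one outcome.
likelihoods = [Fraction(1, 2), Fraction(2, 3), Fraction(0)]

post = posterior(priors, likelihoods)
print(post)  # [Fraction(1, 3), Fraction(2, 3), Fraction(0, 1)]
```

The posteriors sum to 1, as they should, because the denominator normalizes the joint terms over the whole partition.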
