Attention


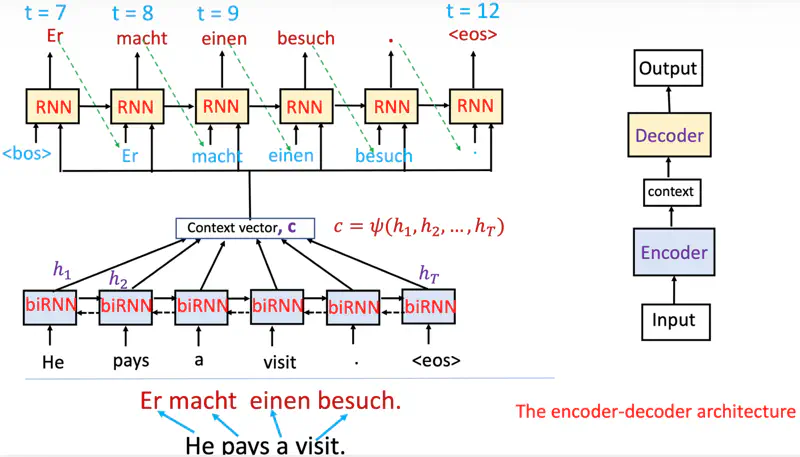

The entire input, represented by the encoder hidden states \(h_1, h_2, \dots, h_T\), is encoded into one fixed-length vector.

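So, as the length of an input sentence increases, the performance of a basic encoder–decoder deteriorates rapidly: a single fixed-length vector has to hold the whole sentence, and the vanishing gradient problem makes it hard for the RNN to retain information from early words.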

Read more about Vanishing Gradient Problem
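To make the fixed-length bottleneck concrete, here is a minimal sketch of a vanilla tanh RNN encoder in NumPy. The function name `encode`, the weight names `W_xh` and `W_hh`, and all sizes are assumptions chosen for illustration, and the weights are random rather than trained. The point is only that the final hidden state has the same size for a 5-word and a 50-word input.

```python
import numpy as np

def encode(inputs, W_xh, W_hh):
    """Run a vanilla tanh RNN and return all hidden states h_1..h_T."""
    h = np.zeros(W_hh.shape[0])
    states = []
    for x in inputs:
        # Each step mixes the current input with the previous hidden state.
        h = np.tanh(W_xh @ x + W_hh @ h)
        states.append(h)
    return np.stack(states)  # shape: (T, hidden_dim)

# Hypothetical sizes and random (untrained) weights, for illustration only.
rng = np.random.default_rng(0)
input_dim, hidden_dim = 4, 8
W_xh = rng.normal(scale=0.1, size=(hidden_dim, input_dim))
W_hh = rng.normal(scale=0.1, size=(hidden_dim, hidden_dim))

short_sentence = rng.normal(size=(5, input_dim))   # T = 5
long_sentence = rng.normal(size=(50, input_dim))   # T = 50

# A basic encoder-decoder hands only the last hidden state to the decoder.
context_short = encode(short_sentence, W_xh, W_hh)[-1]
context_long = encode(long_sentence, W_xh, W_hh)[-1]

print(context_short.shape, context_long.shape)  # both are (8,)
```

However long the input is, the decoder receives the same number of values, so longer sentences have to be compressed more aggressively.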

Encoder-Decoder Architecture (RNN)

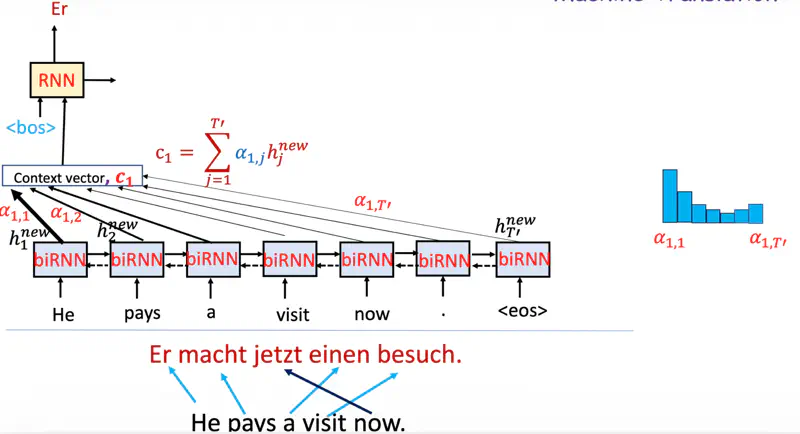

The decoder decides which parts of the source sentence to pay attention to.

By letting the decoder have an attention mechanism, we relieve the encoder from the burden of having to encode all information in the source sentence into a fixed-length vector.

While decoding each output word, the decoder pays attention only to the relevant inputs.
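In the notation of the Bahdanau et al. paper referenced at the end of this post, this can be written as follows. Here \(s_{i-1}\) is the previous decoder hidden state, \(h_j\) is the \(j\)-th encoder hidden state, and \(W_a\), \(U_a\), \(v_a\) are learned parameters. The paper writes the input length as \(T_x\); it is written as \(T\) here to match the notation above.

\[
e_{ij} = v_a^\top \tanh\left(W_a s_{i-1} + U_a h_j\right)
\]

\[
\alpha_{ij} = \frac{\exp(e_{ij})}{\sum_{k=1}^{T} \exp(e_{ik})}
\]

\[
c_i = \sum_{j=1}^{T} \alpha_{ij} h_j
\]

The weights \(\alpha_{ij}\) are non-negative and sum to 1 over \(j\), so the context \(c_i\) is a weighted average of the encoder states, with the largest weights on the inputs most relevant to output step \(i\).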

Decoder Context

Note: The decoder context is no longer fixed; it is recomputed at every decoding step.

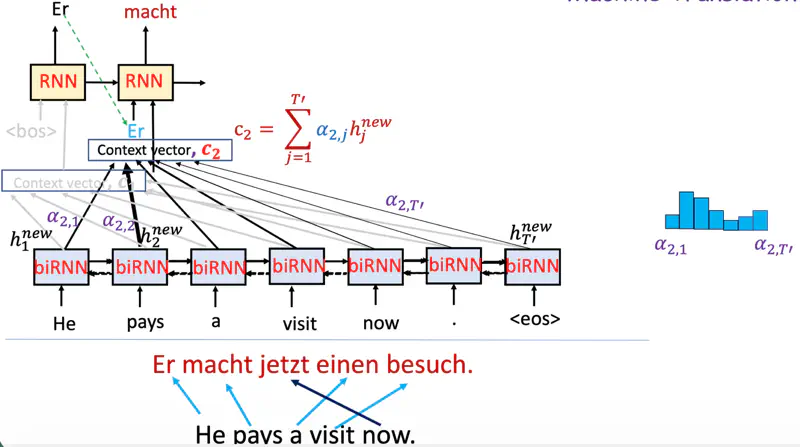

While predicting the next word, the decoder (instead of relying only on the final encoder hidden state) dynamically pays attention to only the relevant context from the encoder at that time step.
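The following NumPy sketch shows one way to compute such a per-step context, using additive (Bahdanau-style) scoring. The function names `softmax` and `additive_attention`, the parameter names `W_a`, `U_a`, `v_a`, and all dimensions are assumptions for illustration. The weights and encoder states are random stand-ins, not a trained model.

```python
import numpy as np

def softmax(x):
    """Numerically stable softmax over a 1-D array."""
    e = np.exp(x - np.max(x))
    return e / e.sum()

def additive_attention(s_prev, H, W_a, U_a, v_a):
    """Return (context, weights) for one decoding step.

    s_prev: previous decoder state, shape (dec_dim,)
    H:      encoder hidden states h_1..h_T, shape (T, enc_dim)
    """
    # One score per encoder position: v_a^T tanh(W_a s_prev + U_a h_j).
    scores = np.tanh(s_prev @ W_a.T + H @ U_a.T) @ v_a  # shape (T,)
    weights = softmax(scores)                            # sums to 1
    context = weights @ H                                # shape (enc_dim,)
    return context, weights

# Hypothetical sizes and random values, for illustration only.
rng = np.random.default_rng(0)
T, enc_dim, dec_dim, attn_dim = 6, 8, 8, 5
H = rng.normal(size=(T, enc_dim))
W_a = rng.normal(size=(attn_dim, dec_dim))
U_a = rng.normal(size=(attn_dim, enc_dim))
v_a = rng.normal(size=attn_dim)

# Two different decoder states stand in for two different decoding steps.
context_1, weights_1 = additive_attention(rng.normal(size=dec_dim), H, W_a, U_a, v_a)
context_2, weights_2 = additive_attention(rng.normal(size=dec_dim), H, W_a, U_a, v_a)

print(weights_1.round(3))
print(weights_2.round(3))
```

The two decoder states produce different weight vectors over the same encoder states, and therefore different context vectors. This is what it means for the decoder context to change from one time step to the next.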

Why does it matter?

This allows the decoder to “look back” at the entire input sequence, mitigating the information loss that occurs in basic encoder-decoder models when handling long sentences.

Research Paper: Dzmitry Bahdanau, Kyunghyun Cho, Yoshua Bengio, "Neural Machine Translation by Jointly Learning to Align and Translate", 2014. https://arxiv.org/pdf/1409.0473