Tokenization

5 minute read

Text is split by whitespace or punctuation.

Each unique word gets its own ‘ID’ in the vocabulary.

e.g., “The player is playing”, becomes:

[“The”, “player”, “is”, “playing”]

Issues

- Out-Of-Vocabulary (OOV): If the model has seen only “player” in the training set and at runtime it sees “players” or “playful”, then it marks them as

(Unknown). - Vocabulary Explosion: If we use full words then vocabulary size.

- No Shared Meaning: The model treats “running” and “run” as completely unrelated symbols, even though they share the same root.

Text is split into individual letters or symbols.

e.g., “Apple”, becomes:

[“A”, “p”, “p”, “l”, “e”]

Issues

- Loss of Semantics: Individual characters like “a” or “p” carry no inherent meaning.

- The model has to work much harder to learn that the sequence “A-p-p-l-e” represents a fruit.

- Long Sequences: A single sentence becomes a massive string of tokens.

- Computationally Expensive: Longer sequences require more memory and processing power to calculate relationships between tokens.

A fixed-size vocabulary that can represent any word by breaking it into meaningful sub-units.

e.g., “unhappiness”, becomes:

[“un”, “happi”, “ness”]

Benefits

- Small vocabulary size.

- No OOV (everything decomposable).

- Better generalization.

Comparison: Word-Level, Character-Level & Sub-Word Tokenization

| Feature | Word-Level | Char-Level | Sub-Word |

|---|---|---|---|

| Vocab Size | Very Large | Small | Medium (Fixed) |

| OOV Problem | Severe | None | None |

| Meaning (Per Token) | High | Low | High (Morpheme-based) |

| Sequence Length | Small | Very High | Balanced |

Originally a data compression algorithm, Byte Pair Encoding (BPE) was adapted for NLP to iteratively merge the most frequent adjacent pairs of characters into new tokens.

BPE Algorithm

- Initialization: Prepare a corpus and define a target vocabulary size ‘’. Break every word into individual characters (plus a special end-of-word symbol ).

- Frequency Count: Count all adjacent pairs of symbols in the corpus.

- Merge: Identify the pair  with the highest frequency and merge them into a single new symbol .

- Repeat: Update the corpus with the new symbol and repeat until the vocabulary reaches size ‘’ or no more merges are possible.

Example

--- Initial Word Corpus ---

Word Count

------------------ -------

l o w </w> 5

l o w e r </w> 2

n e w e s t </w> 6

w i d e s t </w> 3

h i g h e s t </w> 2

Iteration 0

| Token | Frequency |

|---------+-------------|

| e | 19 |

| </w> | 18 |

| w | 16 |

| s | 11 |

| t | 11 |

| l | 7 |

| o | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

Action: Merge ('e', 's')

Iteration 1

| Token | Frequency |

|---------+-------------|

| </w> | 18 |

| w | 16 |

| es | 11 |

| t | 11 |

| e | 8 |

| l | 7 |

| o | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

Action: Merge ('es', 't')

Iteration 2

| Token | Frequency |

|---------+-------------|

| </w> | 18 |

| w | 16 |

| est | 11 |

| e | 8 |

| l | 7 |

| o | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

| d | 3 |

Action: Merge ('est', '</w>')

Iteration 3

| Token | Frequency |

|---------+-------------|

| w | 16 |

| est</w> | 11 |

| e | 8 |

| </w> | 7 |

| l | 7 |

| o | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

| d | 3 |

Action: Merge ('l', 'o')

Iteration 4

| Token | Frequency |

|---------+-------------|

| w | 16 |

| est</w> | 11 |

| e | 8 |

| </w> | 7 |

| lo | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

| d | 3 |

| g | 2 |

Action: Merge ('lo', 'w')

Iteration 5

| Token | Frequency |

|---------+-------------|

| est</w> | 11 |

| w | 9 |

| e | 8 |

| </w> | 7 |

| low | 7 |

| n | 6 |

| i | 5 |

| h | 4 |

| d | 3 |

| g | 2 |

OOV Handling Example

Original OOV: 'lowest'

BPE Segmented: ['low', 'est</w>']

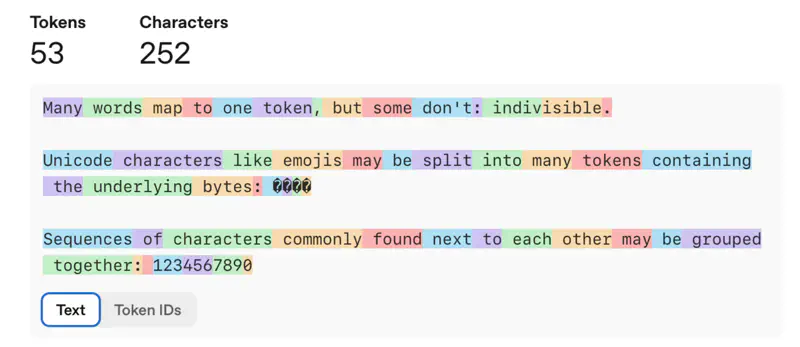

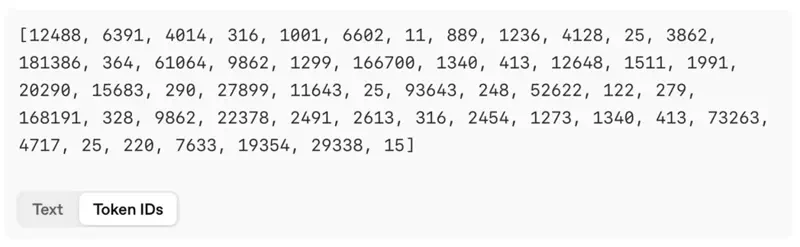

OpenAI Tokenizer

WordPiece merges the pair that maximizes the likelihood of the training data.

It chooses the pair\((s_1, s_2)\) that maximizes:

- \(P(s_1, s_2)\): probability of the pair appearing together.

- \(P(s_1)P(s_2)\): probability of the pair appearing independently.

e.g: Is “unfriendly” in vocabulary? No;

Is “un” + “##friend” + “##ly” available? Yes.

where, “##” means continuation of the previous token.

Note: WordPiece is used in BERT.

SentencePiece is not just a new algorithm but a subword regularization framework that treats the input as a raw stream of characters, including spaces.

Key Innovations:

- Space as a Symbol:

- It treats whitespace as a normal character (represented as “underscore”).

- This makes it “lossless”; you can reconstruct the exact original string from the tokens.

- Algorithm Agnostic:

- It can implement both BPE and Unigram Language Model (a probabilistic approach that removes tokens that least impact the overall likelihood of the corpus).

- No Pre-tokenization:

- Unlike BPE/WordPiece, which often require a preliminary step to split text by whitespace, SentencePiece works directly on raw Unicode strings.

- This is vital for languages like Chinese or Japanese that do not use spaces between words.